Copernicus Returns

Ptolemy, Project Hail Mary, and macrodoses of humble pie


I. Revolutions, Literally

1,800 years ago, Claudius Ptolemy, a mathematician living in Egypt, followed in the footsteps of Aristotle, the Egyptians, and the Babylonians by “confirming” that our intuitions were correct: obviously, we Earthlings were not moving. The Moon, Sun, and planets orbited a stationary Earth, and although the planets did not appear to move in perfect circles, Ptolemy became famous for accurately predicting their positions.

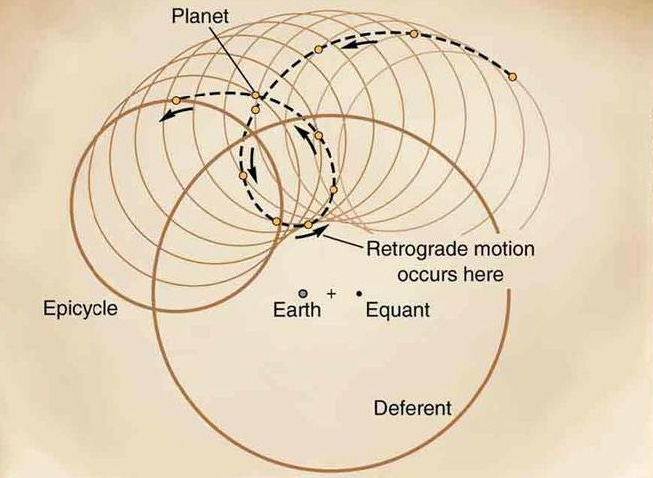

Despite having zero knowledge of the distant-future discoveries of gravity (Newton) and elliptical orbits (Kepler), Ptolemy made his model of celestial orbits increasingly accurate: whenever predictions diverged from observations, he added smaller circles (called epicycles) to close the gap.1

(In a related story, the only requirement to graduate from kindergarten at my school was to pass a shoe-tying exam. Shamefully, knowing that I had zero chance to pass, I lied to my teacher about having “definitely already passed that, yep.” Much like Ptolemy, when I later faced shoelace disasters during indoor soccer games, I would just tie knots on knots on knots until things magically worked. There were evidently no Velcro cleats in Kentucky.)

Ptolemy’s model was so bizarrely accurate that it became the standard for well over 1,000 years. Columbus and crew would not have sailed westward to “Asia” had Ptolemy not dramatically underestimated the circumference of the Earth.2

Yet Ptolemy’s model was wrong. He was the original king of overfitting, which is when a model is tuned so closely to the data at hand that a good fit gets mistaken for a correct explanation.
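For the curious, here is a minimal sketch of why stacking circles can match almost any set of observations. It is not Ptolemy's actual procedure: it swaps in a modern stand-in, the discrete Fourier transform, which treats each coefficient as one rotating circle. The observations are made up for illustration, and the names `epicycle_coefficients`, `predict`, and `rms_error` are hypothetical helpers.

```python
import cmath

def epicycle_coefficients(points):
    """Discrete Fourier transform: coefficient k is a circle turning k times per cycle
    (k = 0 is just a fixed offset)."""
    n = len(points)
    return [
        sum(p * cmath.exp(-2j * cmath.pi * k * t / n) for t, p in enumerate(points)) / n
        for k in range(n)
    ]

def predict(coeffs, t, keep):
    """Predicted position at time step t using only the `keep` largest circles."""
    n = len(coeffs)
    largest = sorted(range(n), key=lambda k: abs(coeffs[k]), reverse=True)[:keep]
    return sum(coeffs[k] * cmath.exp(2j * cmath.pi * k * t / n) for k in largest)

def rms_error(points, coeffs, keep):
    """Root-mean-square distance between predictions and observations."""
    n = len(points)
    total = sum(abs(predict(coeffs, t, keep) - p) ** 2 for t, p in enumerate(points))
    return (total / n) ** 0.5

# Made-up "observations": eight positions on an irregular loop, stored as complex numbers.
observations = [3+0j, 2+2j, 0+1j, -1+2j, -2+0j, -1-1j, 0-3j, 2-1j]
coeffs = epicycle_coefficients(observations)
errors = [rms_error(observations, coeffs, keep) for keep in range(1, len(observations) + 1)]

for keep, err in enumerate(errors, start=1):
    print(f"{keep} circle(s): RMS error {err:.3f}")
```

The error never goes up as circles are added, and with as many circles as observations it drops to essentially zero. The fit is perfect on the data it was tuned to, yet it says nothing about why the points sit where they do.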

Some 400 years before Ptolemy and 1,800 years before Copernicus, Aristarchus of Samos first proposed the heliocentric (Sun-at-center) idea. Aristarchus’s ideas were ignored for many reasons, chief among them that, without an understanding of inertia, a moving Earth seemed to mean everything on it would be perpetually flung around.

It wasn’t until the invention of the telescope in 1608, and Galileo’s ensuing observations of Venus, that the “planets-orbit-the-Earth” model became difficult to defend.3

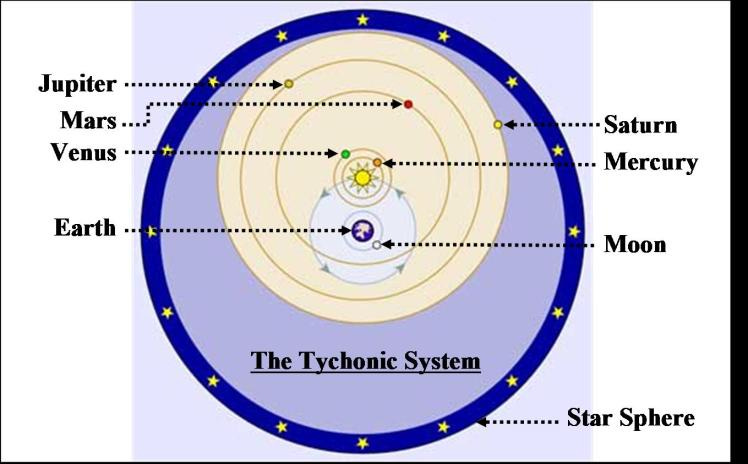

To cope with, but not fully accept, the mounting evidence of the heliocentric model, there was a 150-year period during which the wild Tychonic model (below) became a popular compromise. (A key holdup was that people still didn’t realize how far away stars were, so there was no reasonable explanation as to why stars didn’t meaningfully shift in the night sky if we orbited the Sun.)

Newton’s gravitational laws showed that the Sun, as the much larger body, couldn’t orbit the Earth, and then fellow Englishman James Bradley proved once and for all (via the “aberration of light”) that the Earth was not stationary. By 1822, even the Catholic Church removed its ban on heliocentric books.

The heliocentric shift is but one instance in a much larger and ongoing progression: the humbling of humanity.

II. A milli, a milli, a 200,000-milli-milli milli

History doesn’t repeat, but it rhymes. — Mark Twain (the internet attributes this quote, therefore he definitely said it)

Terence Tao, mathematician, former prodigy, and one of the smartest humans alive,4 recently shared a fascinating aside during a podcast interview:

Often progress has to be made not by adding more theories, but by deleting some assumptions that you have in your mind… Right now we’re going through a cognitive version of the Copernican revolution, where we used to think that human intelligence is the center of the universe, and now we’re seeing that there are very different types of intelligence out there with very different strengths and weaknesses.

While we scoff at the medieval, geocentric fools, Tao points out that we have our own blind spots today. Lost in the frequent panic about AI and creating super-human intelligence are some basic facts about human intelligence.

Here are three:

I. Humans are but one of an estimated 7–8 million animal species on the planet, and we are, scientifically, apes. Non-apes like dolphins “have senses of empathy, altruism and attachment.” Non-animals show something similar: based on the communication networks in forests (“wood wide web” observations by scientists like Suzanne Simard), trees seem to be better sharers than your average human adult. Even certain fungi, when consumed, have shown promise in early studies as treatments for PTSD.

II. For more than 99.99% of the Earth’s existence, there were no Homo sapiens, and there is limited evidence of complex language or symbolic thought before 100,000 years ago. If the history of the Earth were a calendar year, modern humans would emerge roughly 20 to 35 minutes before midnight on New Year’s Eve. As Carl Sagan put it, we are “Johnny-come-latelys.”

III. In 1976, five years after David Bowie posed the question “Is there life on Mars?”, NASA’s Viking landers found no conclusive evidence of any. Beyond Mars, none of the Sun’s other planets appear capable of harboring life.5 In this solar system, the Earth is special.

One year later, however, NASA launched Voyager 1, which forced us to contemplate our definition of “special” as it flew far, far away.6 In 2012, it became the first space probe to cross into interstellar space. Voyager is headed towards the other 100–500 billion stars of the Milky Way, though it sadly lacks the speed to escape the Milky Way’s gravitational pull. It will never see the other 2 trillion galaxies in the observable universe, or their 200+ sextillion combined stars.

Human intelligence is made up of the same elements that permeate the universe: hydrogen, oxygen, carbon, and nitrogen (e.g. in DNA and RNA). Though we humans rack our brains for reasons why we haven’t seen intelligent life outside of Earth, it seems impossible that in the hundreds of sextillions of solar systems, composed of the same fundamental elements as the Sun’s, we are the only intelligence.
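The back-of-envelope numbers in this section are easy to sanity-check. The sketch below is only that, a rough check under stated assumptions: an Earth age of about 4.54 billion years, Homo sapiens emerging somewhere between 200,000 and 300,000 years ago, and an average of 100 billion stars per galaxy. None of those three inputs comes from the essay itself, and the function names are hypothetical.

```python
EARTH_AGE_YEARS = 4.54e9           # assumption: approximate age of the Earth
MINUTES_PER_YEAR = 365 * 24 * 60   # a 365-day calendar year

def share_of_earth_history(years_ago):
    """Fraction of Earth's history covered by the last `years_ago` years."""
    return years_ago / EARTH_AGE_YEARS

def minutes_before_midnight(years_ago):
    """Map 'years ago' onto a one-year calendar that ends at midnight on Dec 31."""
    return share_of_earth_history(years_ago) * MINUTES_PER_YEAR

# Assumption: Homo sapiens emerged between 200,000 and 300,000 years ago.
late = minutes_before_midnight(200_000)
early = minutes_before_midnight(300_000)
without_us = 1 - share_of_earth_history(300_000)

# Star count: 2 trillion galaxies times an assumed 100 billion stars each.
GALAXIES = 2e12
STARS_PER_GALAXY = 1e11            # assumption: rough average per galaxy
SEXTILLION = 1e21
stars_in_sextillions = GALAXIES * STARS_PER_GALAXY / SEXTILLION

print(f"Earth without Homo sapiens: {without_us:.4%} of its history")
print(f"Humans arrive {late:.0f} to {early:.0f} minutes before midnight")
print(f"Stars in the observable universe: about {stars_in_sextillions:.0f} sextillion")
```

With these inputs, the calendar figure lands at roughly 23 to 35 minutes before midnight, and the star count at about 200 sextillion. Change the assumptions and the numbers shift, but the punchline does not: we showed up very late, to a very large party.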

In summary:

Humans are not the only intelligent species on Earth.

We are still brand new.

There are 200+ sextillion other stars that we haven’t seen, and they contain the same elements that gave rise to intelligent life on Earth.

Conclusion: Do Not Fear Humble Pie

Just as our ancestors had to internalize that we aren’t physically at the center of the universe, modern humans have to internalize that we aren’t the intellectual center of the universe. (E.T. came in peace, and anyone who has seen the excellent movie Project Hail Mary is now cool with this thought.)

It’s reasonable to resent that AI development outpaces social reform, energy sustainability, and a more equal distribution of resources.

It’s unreasonable, however, when people shake their heads and say that we shouldn’t be trying to create “gods.” Implicit in that statement is the belief that anything surpassing human intelligence must be a god, instead of software running on chips in data centers. Our descendants will see this egocentric belief in the singular importance of human intelligence in the same way that we see Ptolemy’s blind spots.7

The discovery that we orbit the Sun may have scared people and led to denial, but it changed nothing about the inherent value and meaning of human life, love, or religion. The same is true with silicon-based intelligence. We must plan for the future (repeatedly electing Golden Idol candidates does not count as planning) and ensure we’re prepared as technologies do alter our welfare and labor systems. Beyond that, we do not need to fear humble pie.

Ptolemy used other methods (beyond just drawing circles) to make the model match the data. See “equant” and “deferent” in that image.

To be clear, Columbus also confused Arabic and Roman miles and thought Asia was much larger, so Ptolemy was not the only source of his errors.

While Copernicus was a Church administrative employee and avoided controversy by waiting until the end of his life to publish his theories, Galileo attracted much more of it. Because Galileo’s observations actually undercut the geocentric model, the Roman Inquisition (a court of the Church) sent death threats and arrested him. His sentence was commuted to house arrest the very next day, and he spent the final nine years of his life confined at home. Interestingly, almost no early scientists were actually executed for their scientific theories. Those who were burned at the stake, like Giordano Bruno, were condemned for religious blasphemy, unrepentant atheism, etc.

Here is how Scott Alexander, a brilliant psychiatrist and essayist himself, describes Terence Tao:

“I spend my time feeling intellectually inadequate compared to [Austin-based quantum computer scientist] Scott Aaronson. Scott Aaronson describes feeling ‘in awe’ of Terence Tao and frequently struggling to understand him. Terence Tao – well, I don’t know if he’s religious, but maybe he feels intellectually inadequate compared to God. And God feels intellectually inadequate compared to John von Neumann.”

Some moons in our solar system might be able to harbor life.

Interestingly, Voyager 1 contains two copies of the “Voyager Golden Record” to help us communicate with non-human life in the galaxy.

If the rest of Earth’s life forms could talk, they’d all, dogs aside, point out that humanity’s ego about its own intelligence has actually been the problem all along. Perhaps the humble pie that comes with AI will help us improve our treatment of other people and pigs and stuff. Cockroaches, however, can still f*** off.